Streamlining Data Flow in an E-commerce Analytics Dashboard

Imagine trying to understand customer behavior on your e-commerce site while being swamped by the sheer volume of data. The ecommerce-analytics-dashboard project addresses that problem by presenting key metrics in an understandable format. This post looks at how recent work refines the project's data processing pipeline.

The Goal

The primary aim is to optimize how data is fetched, processed, and displayed in the dashboard. Efficiency here translates directly to faster loading times and a better user experience when viewing analytics.

Data Transformation

Rather than feeding raw data straight into the charts, the team transforms it to fit the specific needs of the dashboard's visualizations. This involves:

- Aggregation: Combining granular data points into meaningful summaries (e.g., daily sales totals from individual transactions).

- Filtering: Selecting only the relevant data based on criteria like date ranges or product categories.

- Formatting: Converting data into the correct formats for charts and tables.

Consider this Python example of aggregating sales data:

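    def aggregate_sales(sales_data):
        """Sum sale amounts per date; each sale is a dict with 'date' and 'amount' keys."""
        daily_totals = {}
        for sale in sales_data:
            date = sale['date']
            amount = sale['amount']
            # Start from 0 the first time a date is seen, then accumulate.
            daily_totals[date] = daily_totals.get(date, 0) + amount
        return daily_totals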
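
The filtering and formatting steps listed earlier can be sketched in the same style. This is a minimal illustration, not the project's actual code: the function names `filter_sales` and `to_chart_series`, the `category` field, the use of `datetime.date` values for `date`, and the `labels`/`values` output shape are all assumptions made for the example.

```python
from datetime import date

def filter_sales(sales_data, start, end, category=None):
    """Keep sales dated within [start, end], optionally limited to one category."""
    return [
        sale for sale in sales_data
        if start <= sale['date'] <= end
        and (category is None or sale.get('category') == category)
    ]

def to_chart_series(daily_totals):
    """Turn a {date: total} mapping into parallel, date-sorted label and value lists."""
    dates = sorted(daily_totals)
    return {
        'labels': [d.isoformat() for d in dates],
        'values': [round(daily_totals[d], 2) for d in dates],
    }

# Hypothetical sample records; the 'category' field is an assumption.
sales = [
    {'date': date(2024, 1, 2), 'amount': 19.99, 'category': 'books'},
    {'date': date(2024, 1, 1), 'amount': 5.00, 'category': 'books'},
    {'date': date(2024, 1, 1), 'amount': 42.50, 'category': 'toys'},
    {'date': date(2024, 2, 1), 'amount': 10.00, 'category': 'books'},
]
january_books = filter_sales(sales, date(2024, 1, 1), date(2024, 1, 31), category='books')
series = to_chart_series({date(2024, 1, 2): 19.99, date(2024, 1, 1): 5.00})
```

Filtering before aggregating keeps the later stages working on less data, and sorting by date in the formatting step means the chart receives points in a predictable order regardless of how the records arrived.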

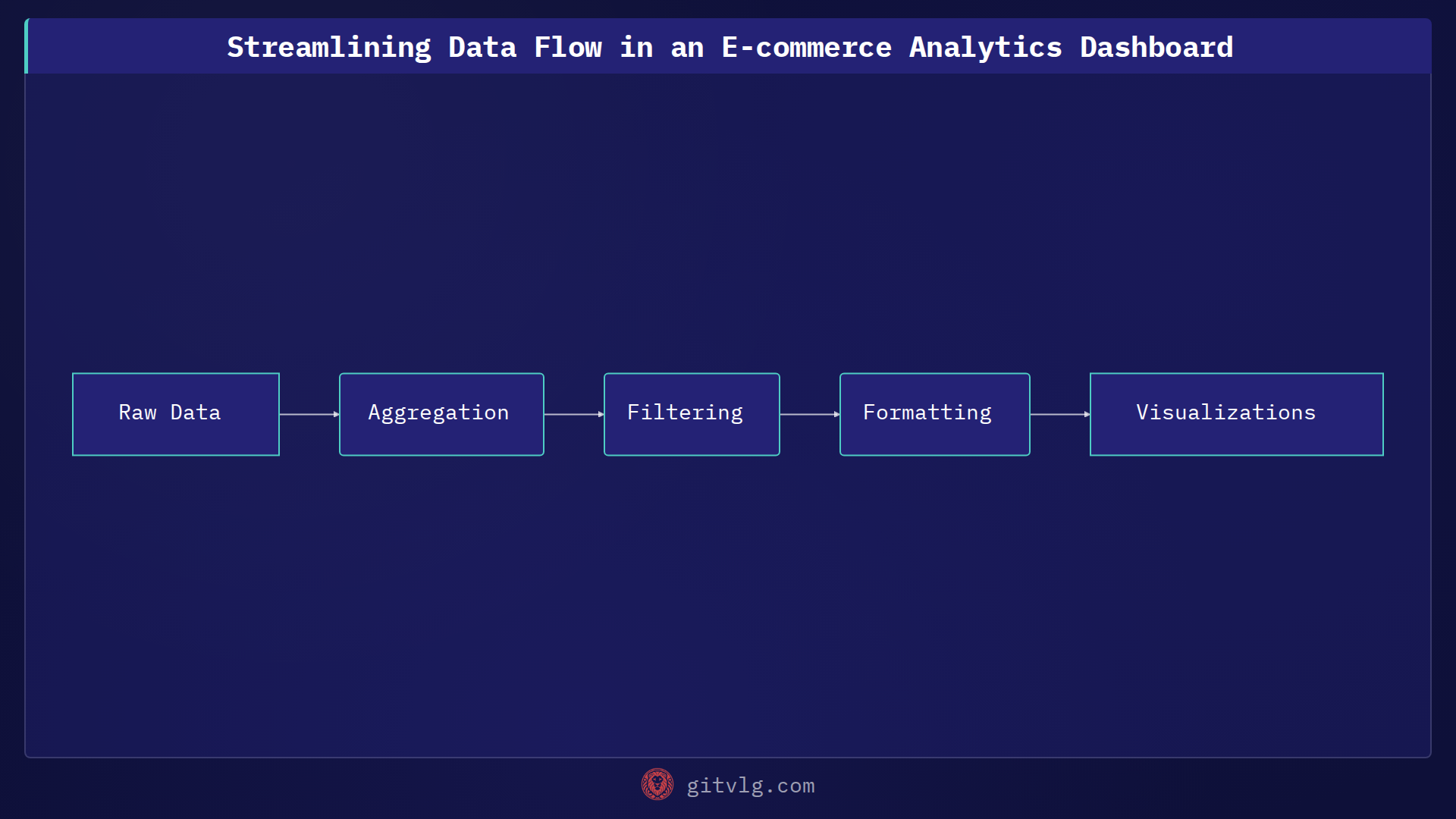

Visualizing the Data Pipeline

Treating the data flow as a pipeline makes it possible to examine and optimize each stage on its own. Raw data passes through processing steps and transformations before it reaches the visualizations. With that picture in mind, developers can pinpoint which stage is the bottleneck and prioritize optimization work there.
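
One way to make the pipeline idea concrete is to run the stages in sequence and time each one. The sketch below is illustrative only: `run_pipeline` and the two placeholder stages are hypothetical names, and real stages would be the dashboard's own fetch, transform, and format functions.

```python
import time

def run_pipeline(data, stages):
    """Pass data through each (name, function) stage in order, timing every stage."""
    timings = {}
    for name, stage in stages:
        started = time.perf_counter()
        data = stage(data)
        timings[name] = time.perf_counter() - started
    return data, timings

# Placeholder stages for illustration; each takes the previous stage's output.
stages = [
    ('filter', lambda rows: [r for r in rows if r['amount'] > 0]),
    ('aggregate', lambda rows: sum(r['amount'] for r in rows)),
]
total, timings = run_pipeline([{'amount': 10}, {'amount': -3}, {'amount': 5}], stages)

# The stage with the largest elapsed time is the first candidate for optimization.
slowest = max(timings, key=timings.get)
print(f"total={total}, slowest stage: {slowest}")
```

With per-stage timings in hand, optimization effort can go to the stage that actually dominates the total rather than to the one that merely looks expensive.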

Actionable Takeaway

When building dashboards, remember that raw data is rarely visualization-ready. Invest time in data transformation to optimize performance and present data in a clear, concise manner. This might involve aggregation, filtering, or reformatting the data to match the expected input of charting libraries.
